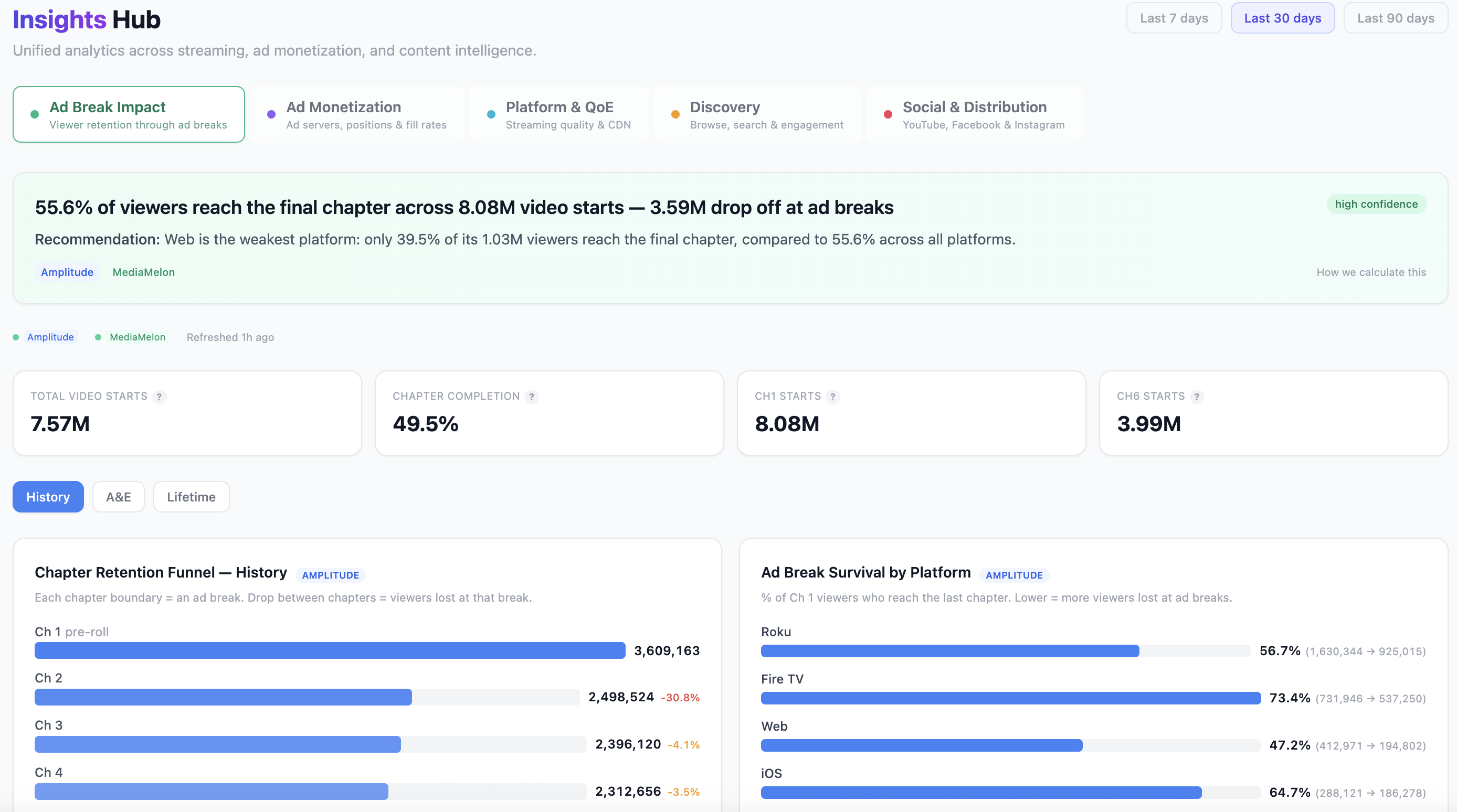

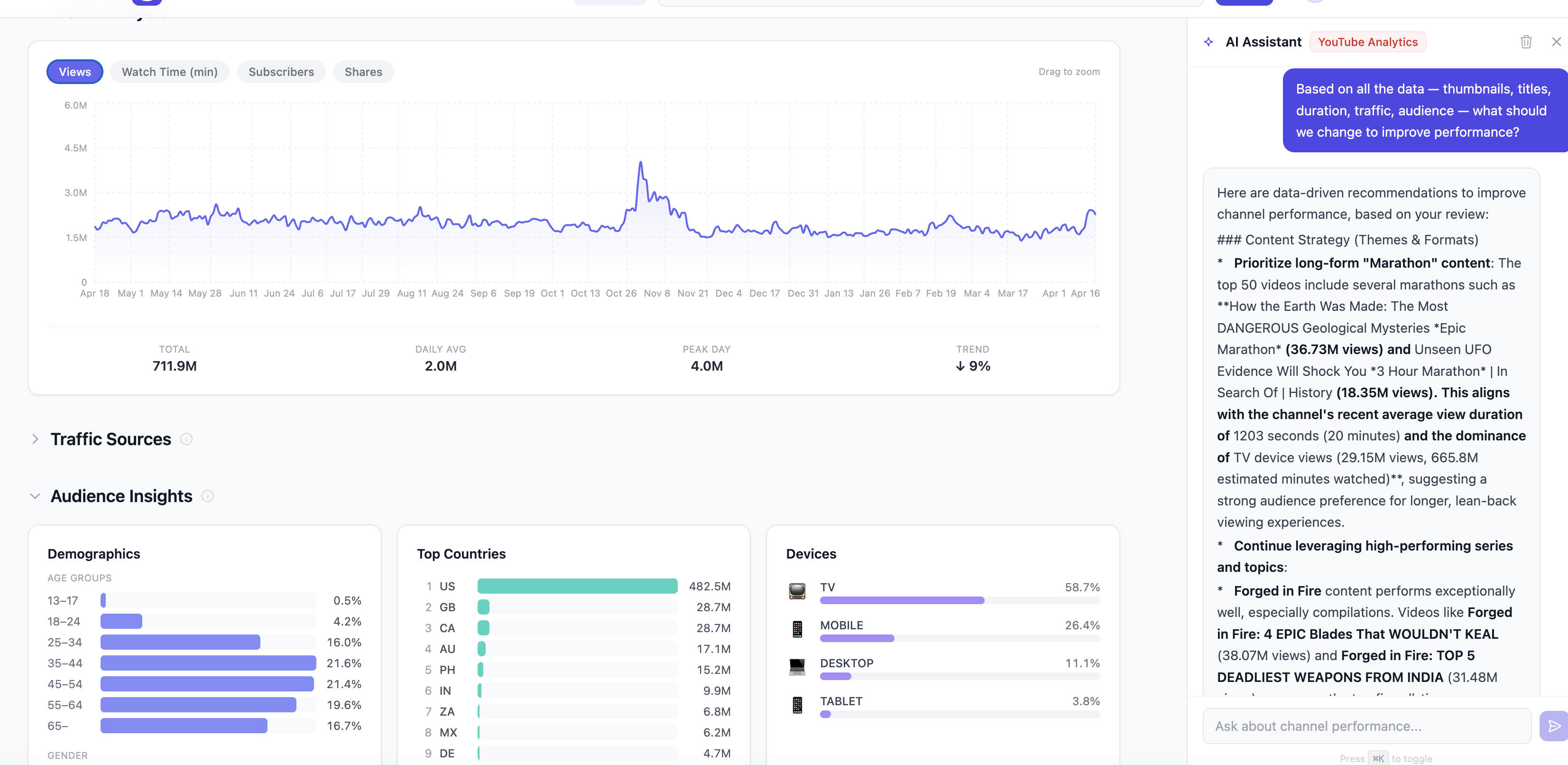

Prism is currently processing 60,000+ hours of broadcast content across multiple networks. The dashboards below are live production views — ad break retention funnels, cross-platform brand scorecards, and audience analytics powered by content intelligence.

These aren't static dashboards. An AI layer continuously synthesizes data across content attributes, audience behavior, platform performance, and revenue signals — surfacing insights no single data source could reveal. For example: Prism identified that product-testing scenes in a studio setting drive 2.4x higher subscriber conversion when clipped as Shorts — a pattern invisible to any single analytics platform. The analysis adapts as new content is processed and new audience data flows in.

From the social desk to the C-suite, Prism gives each team the intelligence they need to move faster and make better decisions.

Prism auto-identifies clip-worthy moments across your entire library, scored by engagement potential. Specify a creative direction ("dramatic contestant reactions," "satisfying technique close-ups," "hosts disagreeing on a verdict") and get timestamped scenes ready for Reels, Shorts, and TikTok in seconds. Generate a 5- to 15-episode microseries from a single prompt in under 90 seconds.

Natural language search that understands context, not just keywords. "Aerial shot of a coastal landscape at sunset" or "expert explains the science behind a technique" returns timestamped results with full metadata — who's on screen, what's in the frame, and exactly why each result matched. Audio fingerprinting identifies any clip's source episode in under 2 seconds.

Prism links talent appearances to audience behavior across every platform. See which hosts drive YouTube retention in specific demographics, whose segments get shared most on Instagram, and which talent combinations produce the highest engagement. Talent decisions move from intuition to evidence.

Product reviews, recommendations, and unboxing moments are high-trust content that drives purchase intent. Prism catalogs every product mention, brand appearance, and recommendation moment — creating a searchable index that links directly to e-commerce opportunities. Know exactly which episodes feature which products, with timestamps and context.

For membership-driven businesses, the question isn't just "what gets views" — it's "what converts." Prism connects content attributes (topics, talent, formats, techniques) to membership conversion events, revealing which types of content actually drive subscription decisions and long-term retention.
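A conversion comparison of this kind can be sketched in a few lines. The example below is a minimal illustration, not Prism's implementation: the function name, the input shape (a set of content tags plus a converted flag per viewing session), and the sample data are all assumptions made for the example.

```python
from typing import Iterable, Set, Tuple


def conversion_lift(sessions: Iterable[Tuple[Set[str], bool]], tag: str) -> float:
    """Conversion rate of sessions with `tag` divided by the rate without it.

    Each session is (content_tags, converted). Hypothetical input shape.
    """
    with_n = with_conv = without_n = without_conv = 0
    for tags, converted in sessions:
        if tag in tags:
            with_n += 1
            with_conv += int(converted)
        else:
            without_n += 1
            without_conv += int(converted)
    if with_n == 0 or without_n == 0 or without_conv == 0:
        raise ValueError("not enough data to compute a lift")
    return (with_conv / with_n) / (without_conv / without_n)


# Made-up sample: 4 of 10 tagged sessions convert, 2 of 10 untagged sessions convert.
sample = (
    [({"product-test"}, True)] * 4 + [({"product-test"}, False)] * 6
    + [({"interview"}, True)] * 2 + [({"interview"}, False)] * 8
)
lift = conversion_lift(sample, "product-test")
```

A lift above 1 means sessions containing that attribute convert at a higher rate than sessions without it. A production analysis would also need to control for confounders such as talent, platform, and release timing.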

When you operate multiple editorial brands, differentiation is everything. Prism maps the content DNA of each brand — themes, talent, mood, production style — and identifies where brands overlap and where they're uniquely positioned. Audience data by brand shows who watches what, and where cross-pollination opportunities exist.

Prism's analytics dashboards connect content attributes to audience data across every platform. Each data source you connect unlocks questions no single system can answer — turning content intelligence into a growth strategy.

One multimodal analysis per scene produces a complete intelligence profile. No per-feature pricing. No separate APIs for transcription, faces, objects, and mood. Everything, simultaneously.

Face detection, identity enrollment, speaker diarization. Label a person once — recognized across the entire archive.

Shot type, camera movement, scene boundaries, lighting. Distinguish a close-up interview from a wide establishing shot.

Full transcription with speaker attribution and diarization. Every word, linked to who said it and when.

Emotional tone, tension level, narrative role (setup, climax, resolution). Identify the dramatic beats of every episode.

Lower thirds, titles, captions, product names, data displays — all captured via OCR and linked to the scene timeline.

Equipment, props, branded items, environmental details. Every product, tool, and set element is cataloged automatically.

50+ semantic tags per scene. Tutorials, reviews, competitions, interviews, demonstrations, reveals, and more.

Auto-scored clip-worthy moments based on narrative tension and engagement signals. Auto-generated chapter breaks for navigation.
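The attributes listed above can be pictured as one record per scene. The sketch below is illustrative only: the class name, field names, and sample values are assumptions, not Prism's actual schema.

```python
from dataclasses import dataclass, field, asdict
from typing import List, Tuple


@dataclass
class SceneProfile:
    """Hypothetical per-scene record; field names are illustrative only."""
    start_s: float                                            # scene start, in seconds
    end_s: float                                              # scene end, in seconds
    people: List[str] = field(default_factory=list)           # identities on screen
    shot_type: str = ""                                       # e.g. "close-up", "wide"
    transcript: List[Tuple[str, str]] = field(default_factory=list)  # (speaker, text)
    mood: str = ""                                            # emotional tone
    narrative_role: str = ""                                  # setup, climax, resolution
    on_screen_text: List[str] = field(default_factory=list)   # OCR results
    objects: List[str] = field(default_factory=list)          # props, products, equipment
    tags: List[str] = field(default_factory=list)             # semantic tags
    clip_score: float = 0.0                                   # engagement potential, 0..1


# Made-up sample values, for illustration only.
scene = SceneProfile(
    start_s=312.0,
    end_s=347.5,
    people=["Host A"],
    shot_type="close-up",
    transcript=[("Host A", "Let's see how it holds up.")],
    mood="tense",
    narrative_role="climax",
    tags=["product-test", "reveal"],
    clip_score=0.82,
)
record = asdict(scene)  # plain dict, ready to store as one row or JSON document
```

Keeping every attribute on a single record is what lets one query combine them, for example "close-up scenes with Host A tagged as a reveal."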

A four-stage pipeline that reads your content the way a human would — understanding context, narrative, and meaning — but at machine scale and cost.

Videos are segmented at natural scene boundaries — not arbitrary 30-second cuts. Shot detection finds real transitions using visual cuts, audio breaks, and scene changes. Each segment preserves its full narrative context: a complete demonstration, a full explanation, a complete interview exchange. Better input means higher quality metadata from the same AI models.
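As a rough illustration of cutting at detected transitions rather than at fixed intervals, the sketch below splits a video wherever a frame-to-frame difference score crosses a threshold. It is a simplified stand-in, not Prism's detector: it models visual cuts only (no audio breaks), and the function name, score scale, and default values are assumptions.

```python
from typing import List, Tuple


def segment_at_cuts(diff_scores: List[float], fps: float,
                    threshold: float = 0.5,
                    min_len_s: float = 2.0) -> List[Tuple[float, float]]:
    """Split a video into (start_s, end_s) segments at detected cuts.

    diff_scores[i] is a hypothetical 0..1 dissimilarity between frame i and
    frame i + 1. A score above `threshold` is treated as a cut after frame i,
    unless the current segment would be shorter than `min_len_s`.
    """
    n_frames = len(diff_scores) + 1
    min_len = int(min_len_s * fps)
    boundaries = [0]
    for i, score in enumerate(diff_scores):
        cut_frame = i + 1  # first frame of the new shot
        if score > threshold and cut_frame - boundaries[-1] >= min_len:
            boundaries.append(cut_frame)
    boundaries.append(n_frames)
    return [(a / fps, b / fps) for a, b in zip(boundaries, boundaries[1:])]


# Eight frames at 1 fps with strong changes after frames 2 and 5.
segments = segment_at_cuts([0.1, 0.1, 0.9, 0.1, 0.1, 0.8, 0.1], fps=1.0)
```

Segment lengths here follow the content, so a long demonstration stays in one piece while a fast-cut montage yields several short segments.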

For the first time, AI can understand video at a cost that makes indexing entire libraries viable. The companies that act first build a data advantage that compounds with every video processed.

One multimodal AI call extracts everything (people, text, transcription, mood, objects, themes) simultaneously. That is 10–30x cheaper than per-feature vendor pricing. Indexing a 10,000-hour archive costs roughly what one full-time employee costs for a month of manual tagging.

Reels, Shorts, and TikTok algorithms favor volume and speed. Companies with searchable, scored archives will out-produce competitors who still scrub footage manually — this quarter, not someday.

Cross-reference talent across seasons. Track topic trends over years. The insights available at 10,000 hours are qualitatively different from those at 100 — and every month of delay is a month your competitors can get ahead.

Need to detect specific products? Track a new content category? Add a compliance field? Change a prompt. No retraining, no code changes, no vendor negotiation. New intelligence deploys in minutes.
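One way to picture a prompt-driven schema: the extraction prompt is generated from a map of field names to descriptions, so adding a field means adding an entry. This is a hypothetical sketch; the field names, descriptions, and prompt wording are assumptions, not Prism's actual prompts.

```python
from typing import Dict

# Hypothetical field map: each key becomes a key in the model's JSON output.
FIELDS: Dict[str, str] = {
    "people": "names of people visible on screen",
    "mood": "overall emotional tone of the scene",
    "objects": "notable equipment, props, and branded items",
}


def build_prompt(fields: Dict[str, str]) -> str:
    """Render the field map as an extraction prompt for a multimodal model."""
    lines = ["Analyze this scene and return a JSON object with these keys:"]
    for name, description in fields.items():
        lines.append(f"- {name}: {description}")
    return "\n".join(lines)


# Adding a compliance field is a one-entry change to the field map.
with_compliance = {
    **FIELDS,
    "compliance_flags": "any content that needs legal or standards review",
}
prompt = build_prompt(with_compliance)
```

Because the schema lives in the prompt rather than in a trained model, the same change applies to newly processed content immediately. Already-indexed scenes would need reprocessing to gain the new field.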

Everyone will eventually index their content. But the companies that connect content attributes to audience behavior first build proprietary intelligence their competitors can't replicate, because that data is unique to their own content and audience.

Prism runs on your cloud. Metadata stays in your database. No SaaS lock-in, no data sharing with vendors. Your content intelligence is a strategic asset — it should be controlled like one.

Prism is built, deployed, and processing real content today. See what your archive looks like when every scene is searchable and every insight is connected — 30 minutes, your content.

Schedule a Demo